Introduction

Artificial Intelligence (hereinafter referred to as AI) is rapidly reshaping the cybersecurity landscape. As organizations strive to keep up with increasingly sophisticated cyber threats, they turn to AI to gain an edge. Yet this reliance brings new risks, such as data privacy violations and a lack of algorithmic transparency. In highly regulated sectors, these risks can quickly escalate into compliance breaches with significant legal consequences. Is AI truly the cybersecurity professional’s best friend, or could it become a compliance nightmare? This blog post explores how organizations can harness the power of AI responsibly and compliantly within a cybersecurity context.

Current Situation Overview

In 2024, 13.48% of enterprises in the European Union (EU) with 10 or more employees or self-employed professionals used at least one form of AI, including technologies such as text mining, speech recognition, text understanding and generation (large language models, LLMs), image recognition, machine learning, robotic process automation, and autonomous systems capable of decision-making. By 2025, generative AI (GenAI) tools such as ChatGPT and Microsoft Copilot had been widely adopted by enterprises across various sectors and integrated into everyday workflows to assist with writing, coding, summarizing, data analysis, and decision support. This surge in usage has brought significant productivity gains but has also raised concerns about information security and cybersecurity. From a compliance point of view, many organizations struggle to ensure that GenAI tools are used in line with internal policies and external regulations, particularly regarding the handling of sensitive or classified information. Without proper controls, employees may unknowingly expose confidential data to external AI models, leading to potential breaches.

To mitigate these risks, enterprises must enforce strict information classification controls that clearly define which data may be processed by GenAI tools and under what conditions. Additionally, organizations must align GenAI usage with regulations such as the EU AI Act, which emphasizes transparency and human oversight for high-risk applications.
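To make "which data may be processed by GenAI tools and under what conditions" concrete, the sketch below writes such a rule down as a small decision function. Everything in it is an assumption for illustration: the four classification levels, the hypothetical tool names `public_chatbot` and `enterprise_copilot`, and the conditions attached to each. It is not taken from any regulation or product, and in practice this kind of rule would more likely be enforced by a data loss prevention or data security platform than by custom code.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Dict, Optional


class Classification(IntEnum):
    """Hypothetical four-level scheme; a higher value means more sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical rule for one approved GenAI tool."""
    max_level: Classification               # most sensitive data the tool may receive
    review_from: Optional[Classification]   # level from which human review is required


# Assumed policy table, for illustration only.
POLICY: Dict[str, ToolPolicy] = {
    # A consumer chatbot outside the organization's control: public data only.
    "public_chatbot": ToolPolicy(Classification.PUBLIC, review_from=None),
    # A contractually governed enterprise assistant: up to confidential data,
    # with human review of outputs once confidential data is involved.
    "enterprise_copilot": ToolPolicy(Classification.CONFIDENTIAL,
                                     review_from=Classification.CONFIDENTIAL),
}


def evaluate(tool: str, level: Classification) -> str:
    """Return 'allow', 'review' or 'block' for sending data of `level` to `tool`."""
    policy = POLICY.get(tool)
    if policy is None:
        return "block"  # tools not in the table have not been assessed: deny by default
    if level > policy.max_level:
        return "block"  # data is more sensitive than the tool is approved for
    if policy.review_from is not None and level >= policy.review_from:
        return "review"  # allowed, but a human must check the output
    return "allow"


print(evaluate("enterprise_copilot", Classification.CONFIDENTIAL))  # review
```

Blocking unlisted tools by default means that a newly embedded GenAI feature is not trusted until someone has assessed it and added it to the policy table.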

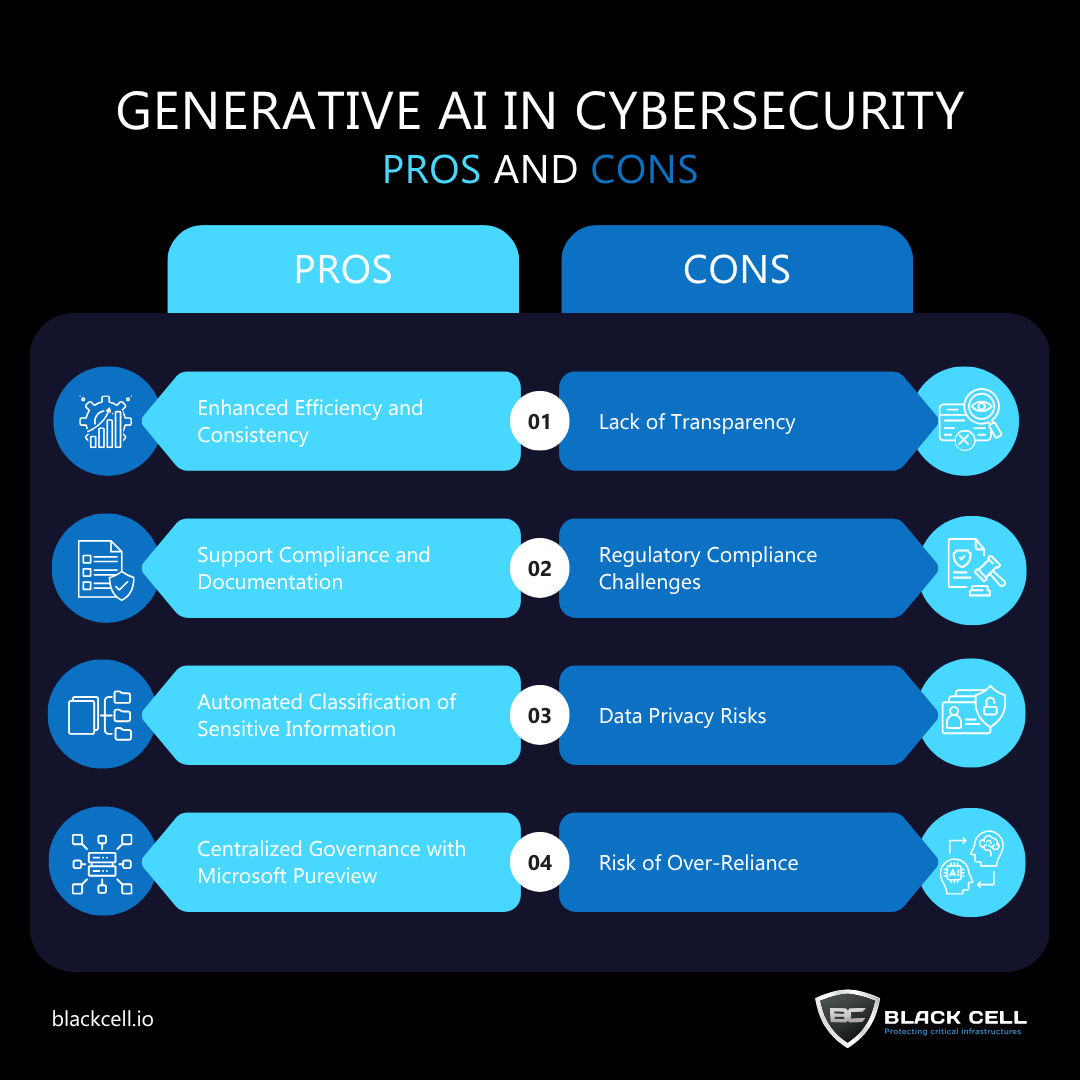

Main advantages and disadvantages of GenAI in Cybersecurity (not an exhaustive list)

In the face of increasingly complex and persistent cyber threats, generative AI is emerging as a powerful tool for cybersecurity professionals and end-users alike. Tools like ChatGPT and Microsoft Copilot are being integrated into daily workflows to assist with tasks such as drafting incident reports, summarizing threat intelligence, generating policy documentation, and even simulating phishing emails for awareness training. These capabilities enhance both efficiency and consistency, especially in environments where rapid response and clear communication are crucial. Generative AI also supports compliance efforts by helping users interpret regulatory texts, generate audit-ready documentation, and maintain up-to-date records aligned with frameworks like ISO/IEC 27001:2022, NIS2, and GDPR. One of the most impactful uses is in the automated classification and labelling of sensitive information, which helps ensure that data is handled according to its confidentiality level.

Microsoft Purview offers organizations a way to adopt generative AI tools such as Microsoft Copilot, ChatGPT Enterprise, and other AI agents with confidence and control. Using Purview’s Data Security Posture Management (DSPM) for AI, companies can implement protection and governance measures that align with their data security and compliance goals. It provides centralized visibility and policy enforcement across a wide range of AI applications, whether they are Microsoft-native Copilot experiences or third-party enterprise AI tools integrated via Microsoft Entra or observed through browser activity. This unified approach helps organizations accelerate AI adoption while ensuring that sensitive data is properly classified, monitored, and protected.

Despite its transformative potential, generative AI introduces several challenges that cybersecurity teams must address proactively. One of the most pressing is the lack of transparency in how GenAI models generate outputs: they often function as “black boxes” with limited explainability, which undermines trust and accountability in security-critical environments. When end-users rely heavily on GenAI tools for threat analysis, policy drafting, or incident response, it becomes difficult to trace the logic behind decisions or recommendations. This complicates audits and forensic investigations, because the tools do not provide a transparent or reproducible decision path that investigators can review or validate. GenAI tools are also highly data-dependent, which raises immediate concerns under regulations such as GDPR, especially when prompts or outputs involve personal or sensitive information. Organizations must ensure that GenAI usage follows privacy-by-design principles and is supported by thorough Data Protection Impact Assessments (DPIAs). As its obligations phase in, the EU AI Act will further tighten requirements, particularly for high-risk applications that interact with infrastructure, access control, or personal data. In these cases, enterprises must demonstrate robust governance, including documentation, monitoring, human oversight, and risk mitigation strategies. Without these safeguards, the convenience and efficiency of GenAI could come at the cost of compliance and security, which makes it essential to balance innovation with responsible use.

AI usage in the organization: Practical Do’s and Don’ts

Successfully integrating generative AI into cybersecurity operations requires more than technical expertise; it demands a structured, compliance-driven approach rooted in governance, awareness, and ethical standards. Before deploying GenAI tools, organizations must first analyse applicable legal and regulatory requirements, including established data protection laws and sector-specific standards, as well as evolving frameworks such as the EU AI Act. This foundational step should produce a clear inventory of obligations that informs all subsequent decisions. Based on this, organizations must develop internal policies, provide usage guidelines for employees, and establish third-party management protocols to ensure responsible and secure use of GenAI. These tools should not be treated as plug-and-play solutions; instead, they must be evaluated for how they handle prompts, process data, and generate outputs, especially when those outputs influence security decisions. Transparency reports, prompt logs, and usage documentation are essential to support future audits and investigations. Data governance policies should also define how sensitive information is classified and protected, so that GenAI tools do not inadvertently process or expose confidential data. Regular reviews are necessary to keep GenAI usage aligned with current legal, operational, and ethical standards. Raising awareness among end-users is equally crucial: employees must understand both the capabilities and limitations of GenAI, and how to use it securely and compliantly. By following this sequence, from requirement analysis to policy development, implementation, and continuous oversight, organizations can unlock the strategic benefits of GenAI while maintaining trust, accountability, and regulatory alignment.
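Prompt logs are named above as audit evidence. A minimal sketch of what one log entry could contain follows, assuming a hypothetical JSON Lines format; the function names and field names are invented for this example and should be adapted to whatever logging or SIEM schema an organization already uses. Storing a SHA-256 digest instead of the raw prompt is one possible design choice: an investigator can later confirm that a separately retained prompt matches the log, without the log itself becoming another store of sensitive data.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_log_entry(user: str, tool: str, classification: str, prompt: str,
                   decision: str) -> str:
    """Build one JSON Lines audit record for a GenAI interaction.

    All field names are hypothetical; adapt them to your own logging schema.
    """
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool,
        "classification": classification,
        # Digest of the prompt text, so the log does not duplicate its content.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "decision": decision,
    }
    # sort_keys gives a stable field order, which makes records easier to compare.
    return json.dumps(record, sort_keys=True)


def prompt_matches(entry: str, prompt: str) -> bool:
    """Check whether a retained prompt corresponds to a logged entry."""
    digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
    return json.loads(entry)["prompt_sha256"] == digest


entry = make_log_entry("alice", "enterprise_copilot", "INTERNAL",
                       "Summarise incident ticket", "allow")
print(entry)
```

If prompts are short and guessable, a plain hash can be brute-forced; a keyed hash (for example, HMAC with a secret held by the security team) would be a stronger variant of the same idea.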

On the “don’ts” side, organizations should avoid integrating generative AI into sensitive security workflows without first conducting a comprehensive impact and compliance assessment. The core issue is that GenAI tools, while powerful, are still IT systems, often operated remotely by third-party providers and frequently embedded into SaaS platforms or productivity suites before security and compliance teams can fully evaluate them. This creates a real risk: sensitive information may be unknowingly shared with external systems through business AI agents, chatbots, or even security-focused copilots, bypassing traditional data protection filters. Relying solely on vendor assurances or pre-built integrations, without understanding how these tools process, store, or transmit data, is a recipe for non-compliance and potential data exposure. In addition, a key risk to business continuity is that AI-generated decisions or scenarios may be inaccurate or misleading, which can lead to poor crisis responses or misallocation of resources during disruptions. Moreover, GenAI systems are dynamic: they evolve through updates and retraining, so their behaviour can change over time. Without ongoing performance monitoring, especially for false positives, false negatives, or unexpected outputs, organizations risk undermining the integrity of their security operations.
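Ongoing performance monitoring can start as a periodic comparison between a GenAI tool’s verdicts and analyst-confirmed outcomes. The sketch below assumes a hypothetical setup in which a GenAI assistant labels alerts as malicious or benign, analysts label a review sample, and the false positive and false negative rates are compared with a baseline recorded when the tool was approved. The baseline values and the 5-percentage-point tolerance are illustrative assumptions, not recommended thresholds.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Rates:
    false_positive_rate: float  # share of benign items wrongly flagged as malicious
    false_negative_rate: float  # share of malicious items the tool missed


def compute_rates(predictions: List[bool], truth: List[bool]) -> Rates:
    """Compare tool verdicts (True = malicious) with analyst-confirmed labels."""
    if len(predictions) != len(truth):
        raise ValueError("predictions and truth must have the same length")
    fp = sum(p and not t for p, t in zip(predictions, truth))
    fn = sum(t and not p for p, t in zip(predictions, truth))
    negatives = sum(not t for t in truth)
    positives = sum(truth)
    return Rates(
        false_positive_rate=fp / negatives if negatives else 0.0,
        false_negative_rate=fn / positives if positives else 0.0,
    )


def drift_alerts(current: Rates, baseline: Rates, tolerance: float = 0.05) -> List[str]:
    """List the metrics that have worsened by more than `tolerance` versus baseline."""
    alerts = []
    if current.false_positive_rate - baseline.false_positive_rate > tolerance:
        alerts.append("false_positive_rate")
    if current.false_negative_rate - baseline.false_negative_rate > tolerance:
        alerts.append("false_negative_rate")
    return alerts


# Hypothetical review sample: tool verdicts vs. analyst-confirmed labels.
predictions = [True, True, False, False, True, False, False, False]
truth = [True, False, False, True, True, False, False, False]
current = compute_rates(predictions, truth)
print(drift_alerts(current, baseline=Rates(0.10, 0.10)))
```

Rerunning the same check after each vendor update or retraining cycle turns “behaviour can change over time” into something that is measured rather than assumed.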

Conclusion

Incorporating generative AI into cybersecurity operations offers significant opportunities, but it also demands a deliberate, compliance-first approach. While these tools can enhance productivity, decision-making, and reporting, they also introduce new risks related to data privacy, transparency, and regulatory alignment. Organizations must begin with a thorough analysis of legal and policy requirements, followed by clear internal guidelines, third-party management, and strong data governance. A key challenge is that GenAI tools often operate as external systems, sometimes integrated into SaaS platforms, which can lead to sensitive data being shared without proper oversight. Without clear policies, awareness, and monitoring, these tools can bypass traditional security controls and complicate audits or investigations. Ultimately, responsible GenAI adoption requires balancing innovation with accountability, ensuring that security and compliance standards evolve alongside the technology.

Strengthen your organization’s AI governance by partnering with our experts to develop policies, assess risks, and manage third-party integrations securely.

Contact our team at [email protected]

Author

Viktória Hankó

Junior Information Security Compliance Consultant
